The researchers used these structures to perform a simple form of matrix-vector multiplication, the fundamental mathematical operation that machine-learning models such as large language models use to process information and make predictions. The results were more than 99% accurate in many cases.
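For readers unfamiliar with the operation, matrix-vector multiplication can be sketched in a few lines of Python. This is an ordinary software illustration, not the researchers' heat-based method. The numbers, the function names `matvec` and `relative_accuracy`, and the accuracy metric are all assumptions made for illustration; the article does not say how accuracy was measured.

```python
def matvec(matrix, vector):
    """Multiply a matrix (a list of rows) by a vector (a list of numbers)."""
    if any(len(row) != len(vector) for row in matrix):
        raise ValueError("each row must have as many entries as the vector")
    # Each output entry is the dot product of one row with the input vector.
    return [sum(a * x for a, x in zip(row, vector)) for row in matrix]


def relative_accuracy(measured, exact):
    """Return 1 minus the relative Euclidean error (an assumed metric)."""
    error = sum((m - e) ** 2 for m, e in zip(measured, exact)) ** 0.5
    scale = sum(e ** 2 for e in exact) ** 0.5
    return 1.0 - error / scale


# Hypothetical values, chosen only to illustrate the arithmetic.
weights = [[2.0, 1.0], [0.0, 3.0]]
inputs = [1.0, 2.0]

exact = matvec(weights, inputs)  # [4.0, 6.0]

# A made-up "measured" result standing in for an imperfect analog readout.
measured = [4.02, 5.97]
print(exact, relative_accuracy(measured, exact))
```

Under this assumed metric, a result counts as "more than 99% accurate" when the measured output vector deviates from the exact product by less than 1 percent of the exact vector's length.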

The researchers still have to overcome many hurdles to scale up this computing method for modern deep-learning models, such as the challenges involved in tiling millions of these structures together. As the matrices become more complicated, the results also become less accurate, especially when there is a large distance between the input and output terminals.

But the technique could also have a more immediate use: detecting problematic heat sources and measuring temperature changes in electronics without consuming extra energy. This would also eliminate the need for multiple temperature sensors that can currently take up space on a chip.

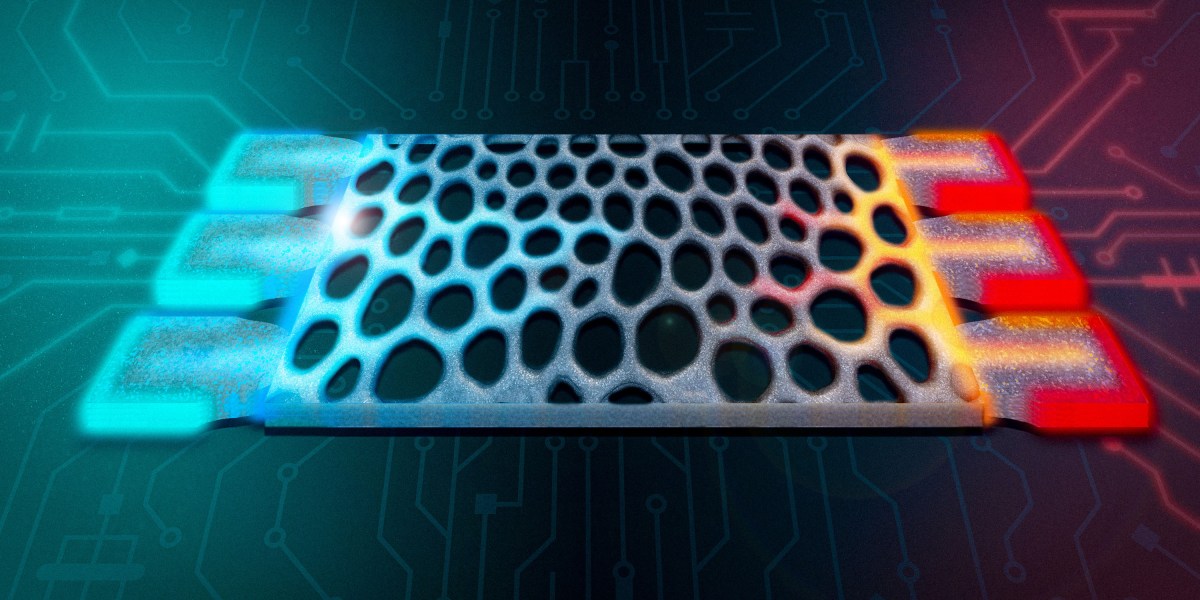

“Most of the time, when you are performing computations in an electronic device, heat is the waste product,” says Caio Silva, an undergraduate student in the Department of Physics and lead author of a paper on the work. “You often want to get rid of as much heat as you can. But here, we’ve taken the opposite approach by using heat as a form of information itself.”
